Canada’s New Capital Stack?

The Canada Strong Fund isn’t a traditional sovereign wealth fund built on surplus capital; it’s a hybrid model seeded with public debt. It aims to attract private and retail investment by de-risking projects in sectors like energy and infrastructure. The key question is whether it can generate consistent returns that exceed the cost of carrying that debt.

SpaceX Just Bought a Call Option on AI Coding

SpaceX has secured a structured option to acquire the AI coding platform Cursor for $60 billion, with a $10 billion payout due even if the deal falls through. The move pairs Cursor’s developer-facing coding product with xAI’s Colossus supercluster, providing the compute needed to challenge OpenAI and Anthropic directly. Ultimately, the deal signals the end of independent AI "wrappers," as application-layer companies increasingly need to merge with large compute providers to survive the cost of training frontier models.

The Allbirds AI Story

Allbirds’ transformation into “NewBird AI” is less a traditional corporate pivot and more a redefinition of what the underlying public asset represents, shifting from footwear brand to speculative AI infrastructure exposure. The market response, driven heavily by retail flows, reflects appetite for AI adjacency rather than any demonstrated capability in GPU-as-a-service or high-performance computing. What makes this case notable is not whether the new strategy succeeds, but how quickly narrative alignment with AI can reprice a public company independent of its operational history.

Mega-Rounds Are Back… But Narrower Than Ever

The current venture capital resurgence is not a broad market recovery, but a narrow concentration of capital into a small group of "inevitable" companies that control scarce resources like compute and infrastructure. While mega-rounds are returning, the middle market remains constrained as investors shift from funding broad exploration to reinforcing a few perceived winners. This evolution marks a fundamental change in venture capital, moving the industry toward a more selective, capital-intensive model that leaves less room for traditional experimentation.

SpaceX IPO

SpaceX is approaching a potential IPO that could become the largest in history, positioning it among the world’s most valuable companies and redefining the upper bound of venture-backed outcomes. The business has evolved beyond satellite launches into core infrastructure, with Starlink driving recurring revenue and anchoring its role across connectivity, defense, and emerging AI ecosystems. If it goes public, the listing will likely reset how public markets value space and deep tech.

Why Your Best Employees Should Be Expensive

AI breaks the old SaaS logic that more usage always means better value. Under seat-based pricing, heavier use cost nothing extra; with token-based pricing, higher employee usage raises costs even as it drives much greater output. The argument is that top performers should be allowed, and even encouraged, to spend heavily on AI tools when that spend helps them ship more, solve problems faster, and create disproportionate value. The real metric should not be minimizing token spend, but measuring the return the company gets from that spend.
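As a rough illustration of ranking by return on token spend rather than by spend itself, the Python sketch below compares two hypothetical employees. The `EmployeeAISpend` class, the dollar figures, and the assumption that AI-driven output can be valued in dollars are all illustrative. They are not data from any company.

```python
from dataclasses import dataclass


@dataclass
class EmployeeAISpend:
    """Hypothetical monthly AI usage record for one employee."""

    name: str
    token_cost: float     # dollars spent on AI tokens this month
    value_created: float  # estimated dollar value of output attributed to AI use (assumption)

    def roi(self) -> float:
        """Net return on token spend: (value - cost) / cost."""
        if self.token_cost <= 0:
            raise ValueError("ROI is undefined without positive token spend")
        return (self.value_created - self.token_cost) / self.token_cost


def rank_by_roi(team: list[EmployeeAISpend]) -> list[EmployeeAISpend]:
    """Order employees by return on spend, highest first, instead of by raw spend."""
    return sorted(team, key=lambda e: e.roi(), reverse=True)


# Made-up figures for illustration only.
team = [
    EmployeeAISpend("light_user", token_cost=100.0, value_created=500.0),
    EmployeeAISpend("heavy_user", token_cost=2_000.0, value_created=30_000.0),
]

for e in rank_by_roi(team):
    print(f"{e.name}: spend ${e.token_cost:,.0f}, ROI {e.roi():.0%}")
```

In this made-up example, the heavy user spends twenty times more on tokens yet earns the higher return. That is the case the argument above warns against penalizing. In practice, attributing output value to AI use is the hard part, and this sketch simply takes that value as an input.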

Capital Velocity and the New Compute Infrastructure Cycle

Nscale’s recent funding round marks a pivotal transition as AI compute shifts from a venture-backed experiment into a mature, institutional infrastructure asset class. By attracting major private equity and hedge fund investors like Point72 and Citadel, the company is helping show that GPU clusters now resemble high-value physical assets such as telecommunications networks or power plants. This influx of deep-pocketed capital allows compute providers like Nscale to remain private longer while rapidly scaling the massive, hardware-intensive environments required to power the next generation of artificial intelligence.

Anthropic’s “Supply Chain Risk” Label

The U.S. Department of War designated Anthropic a national security supply chain risk after negotiations over a $200M Pentagon contract collapsed. The dispute centered on Anthropic’s refusal to remove guardrails preventing its AI from being used for mass domestic surveillance or lethal autonomous weapons without human oversight, leading the Pentagon to move the contract to OpenAI. The episode highlights a growing tension between AI companies that want to control how their systems are used and governments that increasingly view frontier AI models as strategic infrastructure.

Cross-Commodity Swaps

The Watt-Bit Swap is a cross-commodity derivative designed to capture the "Watt-Bit Spread," which is the significant margin between the cost of electricity (Watts) and the market value of the AI compute (Bits) it produces. Much like the "spark spread" allows power plants to hedge the difference between natural gas costs and electricity prices, this swap allows data centers and utilities to link their financial outcomes. Instead of a data center paying a fixed electricity tariff, the payment is indexed to the market value of GPU rental rates. This alignment means that when AI compute is highly profitable, the power provider earns a higher return, and when the market dips, the data center’s power costs decrease, effectively sharing the operational risk and upside of the AI economy.
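The Python sketch below shows one way an indexed tariff could work. It assumes the simplest possible contract: the power price scales linearly with the market GPU rental rate, and it equals the fixed tariff at an agreed reference rate. The class name, the linear index, the `gpu_hours_per_mwh` conversion factor, and all numbers are hypothetical. A real contract would likely add floors, caps, or settlement periods that are not modeled here.

```python
from dataclasses import dataclass


@dataclass
class WattBitSwap:
    """Hypothetical Watt-Bit Swap with a linear index (illustrative assumption)."""

    reference_tariff: float    # $/MWh the data center would pay under a fixed tariff
    reference_gpu_rate: float  # $/GPU-hour at which the indexed tariff equals the fixed one
    gpu_hours_per_mwh: float   # GPU-hours of compute produced per MWh consumed (assumed constant)

    def __post_init__(self) -> None:
        if self.reference_gpu_rate <= 0:
            raise ValueError("reference_gpu_rate must be positive")

    def indexed_tariff(self, gpu_rate: float) -> float:
        """Power price in $/MWh, scaled linearly with the market GPU rental rate."""
        return self.reference_tariff * gpu_rate / self.reference_gpu_rate

    def watt_bit_spread(self, gpu_rate: float, tariff: float) -> float:
        """Margin per MWh: market value of the compute produced minus the power cost."""
        return gpu_rate * self.gpu_hours_per_mwh - tariff


# Made-up parameters: $80/MWh fixed tariff, $2.00/GPU-hour reference rate,
# and roughly 1 kW per GPU including overhead, i.e. 1,000 GPU-hours per MWh.
swap = WattBitSwap(reference_tariff=80.0, reference_gpu_rate=2.0, gpu_hours_per_mwh=1_000.0)

for gpu_rate in (1.0, 2.0, 3.0):
    fixed = swap.reference_tariff
    indexed = swap.indexed_tariff(gpu_rate)
    print(
        f"GPU ${gpu_rate:.2f}/hr | tariff fixed ${fixed:.0f} vs indexed ${indexed:.0f} per MWh | "
        f"spread fixed ${swap.watt_bit_spread(gpu_rate, fixed):,.0f} "
        f"vs indexed ${swap.watt_bit_spread(gpu_rate, indexed):,.0f}"
    )
```

With these made-up parameters, a $3.00 GPU-hour lifts the power price from $80 to $120 per MWh, so the utility captures part of the upside. A $1.00 GPU-hour cuts the price to $40, which lowers the data center's costs when compute prices fall. The spread stays positive in every case; the swap redistributes it rather than eliminating it.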

The Great Hiring Hiatus? Agents and OpenClaw

Agents like OpenClaw may reduce hiring pressure by absorbing recurring operational work that historically justified incremental headcount. Instead of expanding payroll, teams can scale variable token spend, allowing smaller groups to operate with higher leverage while delaying permanent hiring commitments. As this shift unfolds, leaders must balance cost efficiency with governance, deciding which responsibilities require human judgement and which can be formalized into agent workflows.

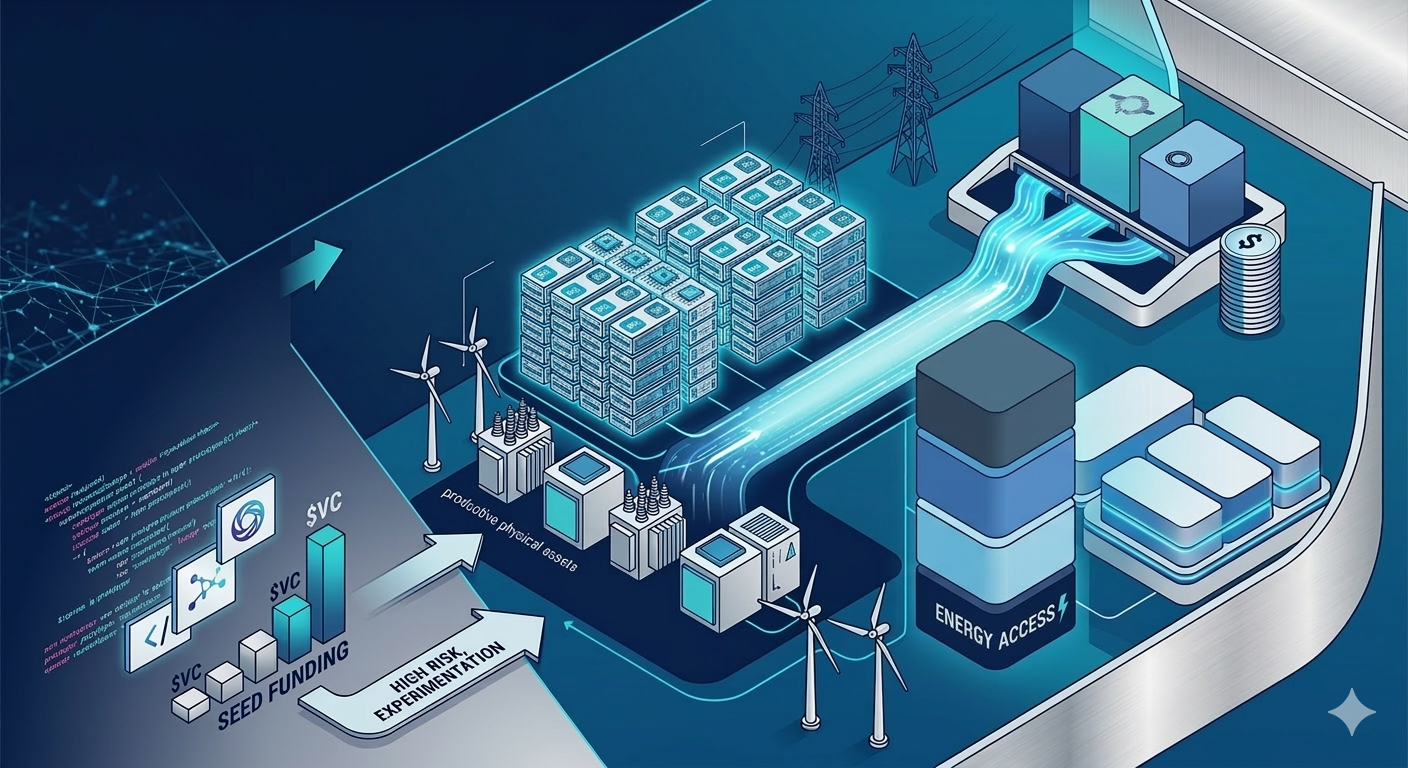

A New Venture Financing Model

Venture capital is undergoing a fundamental shift from a "growth-at-all-costs" software model to a more disciplined, asset-heavy approach focused on sectors like AI infrastructure, energy, and defense. We argue that today’s most consequential companies are grounded in real-world physics and capital intensity, requiring investors to adopt the rigor of project finance to build durable value. Ultimately, venture is evolving by using sophisticated capital structures to underwrite growth where tangible assets and cash durability are the primary competitive advantages.